When a website has only a few pages, search engines can usually crawl and understand it without many problems. But when a website grows into hundreds, thousands, or even millions of URLs, crawling becomes more complex. Search engines need to decide which URLs deserve attention, how often they should visit them, and whether your server can handle repeated crawling.

That is where crawl budget SEO becomes important.

For large websites, crawl budget is not just a technical term. It affects how fast search engines discover new pages, refresh old content, process updates, and understand which sections of your website matter most. If search bots waste time on duplicate pages, broken URLs, filter pages, or thin content, your valuable pages may not get crawled as often as they should.

A strong crawl budget plan helps search engines focus on the right URLs. It supports better indexing, cleaner site structure, and stronger search visibility across Google, Bing, AI search tools, and voice search platforms.

What Is Crawl Budget SEO?

Crawl budget SEO is the process of helping search engines crawl your most important pages efficiently. It focuses on reducing crawl waste and making sure search bots can access useful pages without getting trapped in unnecessary URLs.

In simple terms, crawl budget is the number of URLs search engines can and want to crawl on your website within a given period. Search engines do not crawl every page with the same frequency. They weigh your site quality, server performance, internal linking, content freshness, and page importance before deciding what to crawl.

For large websites, this matters because search engines may not visit every URL quickly. If your site has too many duplicate, outdated, slow, or blocked pages, crawlers may spend time in the wrong areas.

Crawl Budget in Simple Words

Crawl budget means how much attention search engines give to your website during crawling.

If your website has clean URLs, fast loading pages, strong internal links, and useful content, search engines can move through your site more easily. If your website has too many errors, duplicate pages, and weak structure, crawling becomes less efficient.

That is why crawl budget SEO is a core part of Technical SEO for large websites.

Why Crawl Budget Matters for Large Websites

Small websites usually do not face serious crawl budget problems because they have fewer URLs. Search engines can crawl most of their content without much difficulty.

Large websites are different.

They often include product pages, category pages, blog posts, tags, filters, search result pages, paginated URLs, old campaign pages, and parameter based URLs. Without proper control, these pages can create crawl waste. For online stores, strong ecommerce SEO strategies can help manage these URLs properly and guide search engines toward the most valuable product and category pages.

Common Large Website Issues

Duplicate pages, broken URLs, endless filter pages, and thin content are the usual culprits. If your website depends on organic traffic, these issues can affect visibility. Your best content may exist, but search engines may not process it quickly enough.

How Crawl Budget SEO Helps Search Engines

Search engines want to crawl useful, fresh, and accessible content. Your job is to make that process easier.

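A good crawl budget SEO plan helps search engines discover new pages faster, refresh existing content, process updates, and understand which sections of your website matter most.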

This is especially useful for ecommerce websites, news sites, real estate portals, job websites, SaaS documentation websites, and large blogs.

Crawl Budget Factors You Should Understand

| Crawl Budget Factor | Meaning | SEO Impact |
|---|---|---|
| Crawl capacity | How much Googlebot can crawl without slowing your server | A faster, stable server can support better crawl activity |
| Crawl demand | How much Google wants to crawl your pages based on freshness, value, links, and popularity | Important and updated pages may be crawled more often |
| Crawl efficiency | How well crawlers reach valuable URLs without wasting time | Helps large websites improve discovery and indexing |

Signs Your Website Has Crawl Budget Problems

You may not notice crawl budget issues immediately. Many websites keep publishing pages without checking whether search engines are crawling them properly. Tracking SEO metrics and KPIs can help you identify crawling, indexing, and visibility problems before they affect organic performance.

Here are common signs that your site needs better crawl budget management.

Important Pages Are Not Getting Indexed

If important service pages, category pages, or blog posts are discovered but not indexed, search engines may not be getting enough quality signals. This can happen when your website has too many low value URLs competing for crawl attention.

New Pages Take Too Long to Appear

Large websites often publish new content regularly. If new pages take weeks to appear in search results, crawl efficiency may be poor.

Google Crawls Duplicate URLs

Duplicate URLs can come from filters, tracking parameters, pagination, session IDs, and sorting options. When search bots crawl these versions repeatedly, they spend less time on valuable pages.

Crawl Reports Show Too Many Errors

A large number of 404 errors, soft 404 pages, blocked URLs, and server errors can reduce crawl quality. These signals tell search engines that your site structure needs cleanup.

XML Sitemap Contains Poor URLs

Your sitemap should guide search engines to your best pages. If it includes redirected URLs, noindex pages, broken pages, or duplicate pages, it can confuse crawlers.

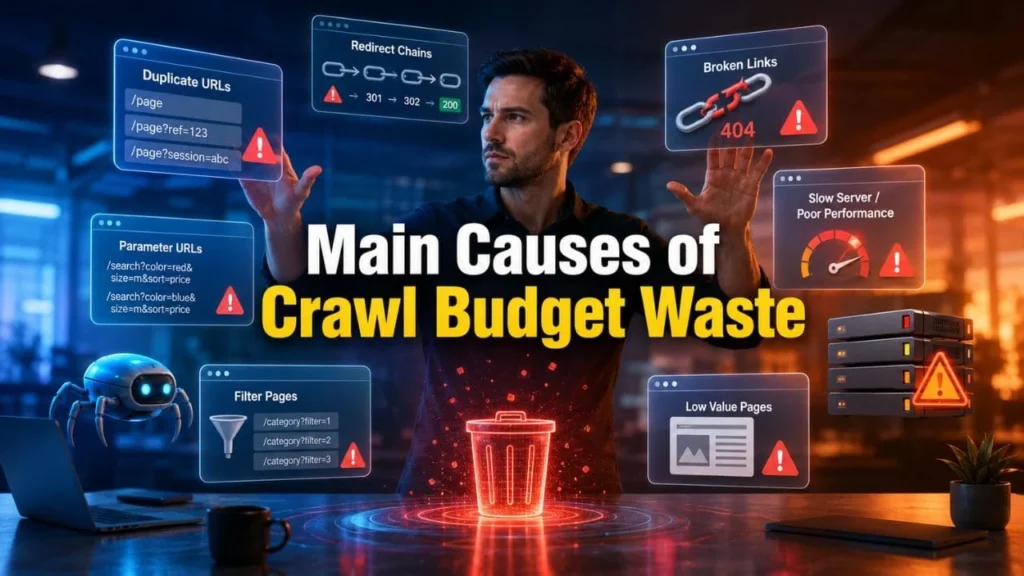

Main Causes of Crawl Budget Waste

Crawl budget waste happens when search engines spend time on URLs that do not help your organic visibility. It can also become worse when multiple pages target the same search intent, which is why reviewing a keyword cannibalization fix guide can help you clean duplicate ranking signals and improve crawl focus.

The most common causes are covered below.

Poor Site Architecture

A weak website structure makes crawling harder. If important pages are buried too deep, search bots may not reach them often.

For better crawling and indexing, large websites need a strong technical SEO foundation so search engines can reach important pages without wasting crawl activity.

Your important pages should be reachable within a few clicks from the homepage or main category pages. A clean structure also helps users and AI search systems understand your website better.
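One way to check the "few clicks" rule is to measure click depth with a breadth-first search over your internal link graph. The sketch below is a minimal illustration: the `site_links` graph and the depth threshold of 2 are made-up assumptions, and in practice the graph would come from a site crawl export.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], start: str = "/") -> dict[str, int]:
    """Return the minimum number of clicks from `start` to each reachable URL."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # in BFS the first visit is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
site_links = {
    "/": ["/shoes/", "/blog/"],
    "/shoes/": ["/shoes/running/"],
    "/shoes/running/": ["/shoes/running/trail-x1"],
    "/blog/": ["/blog/crawl-budget"],
}

depths = click_depths(site_links)
# Pages deeper than the (assumed) threshold are candidates for stronger internal links.
deep_pages = sorted(url for url, depth in depths.items() if depth > 2)
print(deep_pages)
```

Pages that never appear in `depths` are not reachable through internal links at all, which is worth checking separately.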

Duplicate and Similar URLs

Large websites often create multiple versions of the same page. This can happen because of filters, tracking parameters, pagination, session IDs, and sorting options.

When search engines crawl all these versions, they may miss more important content.
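To see how many of your crawled URLs are really the same page, you can normalize each URL and group the variants. This is a rough sketch, not a complete canonicalization routine: the parameter names in `IGNORED_PARAMS` are assumptions and should be replaced with the ones your own site uses.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed parameter names that do not change page content on this hypothetical site.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid", "sort"}


def normalize_url(url: str) -> str:
    """Drop ignored parameters, sort the rest, lowercase the host, remove the fragment."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    ]
    kept.sort()
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), ""))


def group_duplicates(urls: list[str]) -> dict[str, list[str]]:
    """Map each normalized URL to its variants, keeping only groups with duplicates."""
    groups: dict[str, list[str]] = {}
    for url in urls:
        groups.setdefault(normalize_url(url), []).append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}


crawled = [
    "https://example.com/shoes?utm_source=mail",
    "https://example.com/shoes?sort=price",
    "https://example.com/shoes",
    "https://example.com/shoes?color=red",
]
for canonical, variants in group_duplicates(crawled).items():
    print(canonical, len(variants))
```

Here the `color` filter is treated as a distinct page while tracking and sorting parameters are not. Where that line sits is a judgment call for each site.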

Faceted Navigation

Faceted navigation is common on ecommerce and listing websites. It allows users to filter products by price, color, size, brand, rating, and availability.

While this helps users, it can create thousands of URL combinations. If not handled properly, crawlers may waste time crawling endless filter pages.
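A quick calculation shows how fast these combinations grow. The facet sizes below are invented for illustration, and the sketch assumes each facet is either unset or set to a single value with a fixed parameter order. Multi-select filters or reordered parameters would push the number higher.

```python
from math import prod

# Hypothetical number of options per facet on one category page.
facets = {"price": 5, "color": 12, "size": 8, "brand": 30, "rating": 5, "availability": 2}

# Each facet is either unset or set to one of its values, so it contributes (n + 1) states.
combinations = prod(n + 1 for n in facets.values())

# Exclude the one state where no filter is applied (the plain category page).
filtered_urls = combinations - 1
print(f"{filtered_urls:,} possible filtered URLs for a single category")
```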

Slow Server Response

If your server responds slowly, search engines may reduce crawl activity to avoid putting pressure on your site. Fast hosting, clean code, caching, image optimization, and Core Web Vitals improvement can support better crawling.

This is where Technical SEO becomes valuable. Businesses managing thousands of URLs should combine crawl budget SEO with technical SEO and Core Web Vitals optimization to improve crawl efficiency, page experience, and search visibility.

Broken Links and Redirect Chains

Broken links waste crawler time. Redirect chains create unnecessary steps before search bots reach the final URL.

For example, if Page A redirects to Page B and Page B redirects to Page C, search bots need extra crawl effort. The better option is to link directly to the final URL.
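The Page A to Page C example can be expressed as a small script that follows a redirect map to its end and then flattens it. This is a sketch that works on an in-memory dictionary. The `redirects` map and the `max_hops` limit are assumptions, and a real audit would build the map from a crawl or from server configuration.

```python
def resolve_redirect(url: str, redirects: dict[str, str], max_hops: int = 10) -> tuple[str, int]:
    """Follow `redirects` starting at `url` and return (final_url, hop_count)."""
    seen = {url}
    hops = 0
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen or hops > max_hops:
            raise ValueError(f"redirect loop or overly long chain at {url}")
        seen.add(url)
    return url, hops


# Page A -> Page B -> Page C, as in the example above.
redirects = {"/page-a": "/page-b", "/page-b": "/page-c"}

final_url, hops = resolve_redirect("/page-a", redirects)
print(final_url, hops)

# Flatten the map so every source points straight at its final destination.
flattened = {source: resolve_redirect(source, redirects)[0] for source in redirects}
print(flattened)
```

After flattening, every redirect is a single hop, and internal links can be updated to point at the final URLs directly.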

Benefits of Crawl Budget SEO

A well planned crawl budget SEO process gives large websites better technical control and stronger search performance.

Key Benefits

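The key benefits are faster discovery, better indexing, improved technical health, and stronger search visibility.

Crawl budget optimization does not guarantee higher rankings on its own. But it helps search engines access, process, and refresh your best pages more effectively. That can support stronger organic performance over time.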

Crawl Budget SEO Strategy for Large Websites

A good strategy should not focus only on blocking pages. It should improve the full crawl journey across your website.

Audit Crawl Stats in Google Search Console

Google Search Console gives useful crawl data. Review the Crawl Stats report to understand how Googlebot interacts with your website.

Check for crawl spikes, error responses, and slow server response times.

This data helps you find whether bots are crawling useful pages or wasting time on low value areas.

Analyze Server Log Files

Server log analysis shows what search bots actually crawl. This is very useful for large websites because standard crawling tools may not show real bot behavior.

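Review which URLs search bots request, how often they request them, and which status codes your server returns.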

Log files help you find crawl waste that may not be visible from the front end.
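A basic version of this analysis fits in a short script. The sketch below assumes access logs in the common "combined" format and identifies Googlebot by a user agent substring. User agents can be spoofed, so a production audit would also verify the requesting IP addresses. The sample lines are invented.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer, and user agent of a combined-format log line.
LINE_RE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_bot_hits(lines, bot_token: str = "Googlebot"):
    """Count bot requests by status code and by path (query string removed)."""
    by_status: Counter = Counter()
    by_path: Counter = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        if not match or bot_token not in match["agent"]:
            continue  # skip unparseable lines and non-bot traffic
        by_status[match["status"]] += 1
        by_path[match["path"].split("?")[0]] += 1
    return by_status, by_path


GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'66.249.66.1 - - [10/May/2024:06:25:01 +0000] "GET /shoes/?sort=price HTTP/1.1" 200 5123 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/May/2024:06:25:04 +0000] "GET /shoes/?sort=name HTTP/1.1" 200 5090 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/May/2024:06:25:09 +0000] "GET /old-campaign HTTP/1.1" 404 312 "-" "{GOOGLEBOT}"',
    '203.0.113.7 - - [10/May/2024:06:25:10 +0000] "GET /shoes/ HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

status_counts, path_counts = summarize_bot_hits(sample_log)
print(status_counts.most_common())
print(path_counts.most_common(5))
```

Paths that collect many bot hits but are not pages you want indexed, such as sorted listings or old campaign URLs, are the crawl waste this section describes.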

Clean Your XML Sitemap

Your XML sitemap should include only important, indexable, canonical, working URLs. It should not become a storage area for every page on the site.

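Include important, indexable, canonical URLs that return a working page.

Remove redirected URLs, noindex pages, broken pages, and duplicate pages.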

For large websites, create separate sitemaps by page type. This makes tracking easier.
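The filtering and splitting described above could look something like the sketch below. The page records, their field names (`status`, `indexable`, `canonical`, `type`), and the `sitemap-<type>.xml` naming are all assumptions for illustration. Real data would come from a crawler export or your CMS.

```python
import xml.etree.ElementTree as ET
from collections import defaultdict

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def is_sitemap_worthy(page: dict) -> bool:
    """Keep only working, indexable pages whose canonical points to themselves."""
    return page["status"] == 200 and page["indexable"] and page["canonical"] == page["url"]


def build_sitemaps(pages: list[dict]) -> dict[str, str]:
    """Return {filename: sitemap XML}, with one sitemap per page type."""
    by_type = defaultdict(list)
    for page in pages:
        if is_sitemap_worthy(page):
            by_type[page["type"]].append(page["url"])

    sitemaps = {}
    for page_type, urls in by_type.items():
        urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
        for url in sorted(urls):
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
        sitemaps[f"sitemap-{page_type}.xml"] = ET.tostring(urlset, encoding="unicode")
    return sitemaps


base = "https://example.com"
pages = [
    {"url": f"{base}/shoes/", "status": 200, "indexable": True, "canonical": f"{base}/shoes/", "type": "category"},
    {"url": f"{base}/shoes/?sort=price", "status": 200, "indexable": True, "canonical": f"{base}/shoes/", "type": "category"},
    {"url": f"{base}/old-campaign", "status": 301, "indexable": False, "canonical": f"{base}/old-campaign", "type": "landing"},
    {"url": f"{base}/blog/crawl-budget", "status": 200, "indexable": True, "canonical": f"{base}/blog/crawl-budget", "type": "blog"},
]

for filename, xml in build_sitemaps(pages).items():
    print(filename, xml)
```

Keeping one file per page type means indexing problems can be traced to a specific section of the site rather than to the sitemap as a whole.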

Improve Internal Linking

Internal links guide search engines to your most important pages. They also help users find related content naturally.

Use internal links to connect related content, for example blog posts with the category, product, or service pages they support.

For Dakshraj Enterprise, internal linking can connect blog content with Digital marketing services, SEO services, Technical SEO, Local SEO services, and social media marketing services in a natural way.

Use Robots.txt Carefully

Robots.txt helps control crawler access to certain areas of a website. It can be useful for blocking unnecessary crawl paths, but it should be used with care.

A clean robots.txt configuration for crawl control helps search engines avoid unnecessary URL paths and focus more on useful pages.

Use robots.txt for areas such as internal search result pages, low value filter combinations, and parameter based URLs.

Do not use robots.txt as a method to hide private information. Also, do not block pages that search engines need to crawl for canonical signals.
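Before deploying new rules, it helps to test them against a list of URLs you expect to stay crawlable. The sketch below uses Python's standard `urllib.robotparser` for this. The rules and URLs are placeholders, and the standard-library parser uses simple prefix matching, so results for wildcard rules may differ from how Googlebot interprets them.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules; the paths stand in for low value areas on your own site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(url: str, agent: str = "Googlebot") -> bool:
    """Report whether the parsed rules allow `agent` to fetch `url`."""
    return parser.can_fetch(agent, url)


urls_to_check = [
    "https://example.com/products/blue-shirt",  # should stay crawlable
    "https://example.com/search?q=shoes",       # internal search results
    "https://example.com/cart/checkout",        # no search value
]
for url in urls_to_check:
    print(url, "allowed" if is_crawlable(url) else "blocked")
```

Running a check like this against your most important URLs is a cheap safeguard against blocking pages that need to be crawled.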

Control Faceted Navigation

For ecommerce and large listing websites, faceted navigation needs a clear crawl strategy.

You can manage it with robots.txt rules and canonical tags.

The goal is not to block every filter page. Some filter pages may have search demand. The goal is to allow useful pages and control unnecessary combinations.
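One way to put that principle into practice is a simple policy function that separates filter pages worth crawling from the rest. This is only a sketch: the `INDEXABLE_FACETS` allowlist, the one-filter limit, and the `crawl` and `restrict` labels are assumptions, and the right thresholds depend on where your own search demand is.

```python
from urllib.parse import parse_qsl, urlsplit

# Assumption: on this hypothetical store, only these facets have search demand.
INDEXABLE_FACETS = {"brand", "color"}
MAX_ACTIVE_FILTERS = 1


def facet_policy(url: str) -> str:
    """Return "crawl" for useful pages and "restrict" for unnecessary combinations."""
    active = [key for key, _ in parse_qsl(urlsplit(url).query)]
    if not active:
        return "crawl"  # plain category page, no filters applied
    if len(active) <= MAX_ACTIVE_FILTERS and set(active) <= INDEXABLE_FACETS:
        return "crawl"  # a single filter on a facet people search for
    return "restrict"   # handle via robots.txt rules or a canonical tag


for url in [
    "https://example.com/shoes/",
    "https://example.com/shoes/?brand=acme",
    "https://example.com/shoes/?brand=acme&color=red&size=9",
    "https://example.com/shoes/?rating=4",
]:
    print(facet_policy(url), url)
```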

Fix Redirect Chains and Broken URLs

Redirect chains and broken pages waste crawl effort. They also create a poor user experience.

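Fix these issues by updating internal links to point directly to the final URL, collapsing each chain into a single redirect, and repairing or removing links to broken pages.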

A clean URL path helps crawlers move faster and understand your content better.

Improve Page Speed and Core Web Vitals

Crawl budget and performance are connected. A faster website can help search bots crawl more smoothly, and a regular Core Web Vitals audit can identify speed, loading, and usability issues that may affect crawl efficiency.

Improve hosting and server response time, caching, code quality, and image optimization.

This also supports users, conversions, SEO services, and broader Digital marketing services.

Crawl Budget Optimization Checklist

| Task | Why It Matters | Tool to Use |
|---|---|---|
| Check Crawl Stats | Finds crawl spikes, errors, and slow response issues | Google Search Console |
| Review XML Sitemap | Helps crawlers find clean canonical URLs | GSC, Screaming Frog |
| Fix 404 Errors | Stops crawl waste on broken pages | Screaming Frog, GSC |
| Reduce Redirect Chains | Sends crawlers directly to final pages | Screaming Frog |
| Control Faceted URLs | Prevents endless crawl paths | Robots.txt, Canonical Tags |
| Improve Server Speed | Supports better crawl capacity | PageSpeed Insights, Logs |
| Strengthen Internal Links | Helps important pages get crawled faster | Site crawl tools |
| Remove Thin Pages | Improves crawl quality and index quality | Content audit |

Common Crawl Budget Mistakes to Avoid

Many websites lose crawl efficiency because of avoidable mistakes.

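Avoid these issues: listing redirected, noindex, or broken URLs in your sitemap, blocking pages that search engines need to crawl for canonical signals, leaving redirect chains in place, and letting filter URLs multiply unchecked.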

These issues can make search engines spend crawl time on pages that do not support your ranking goals.

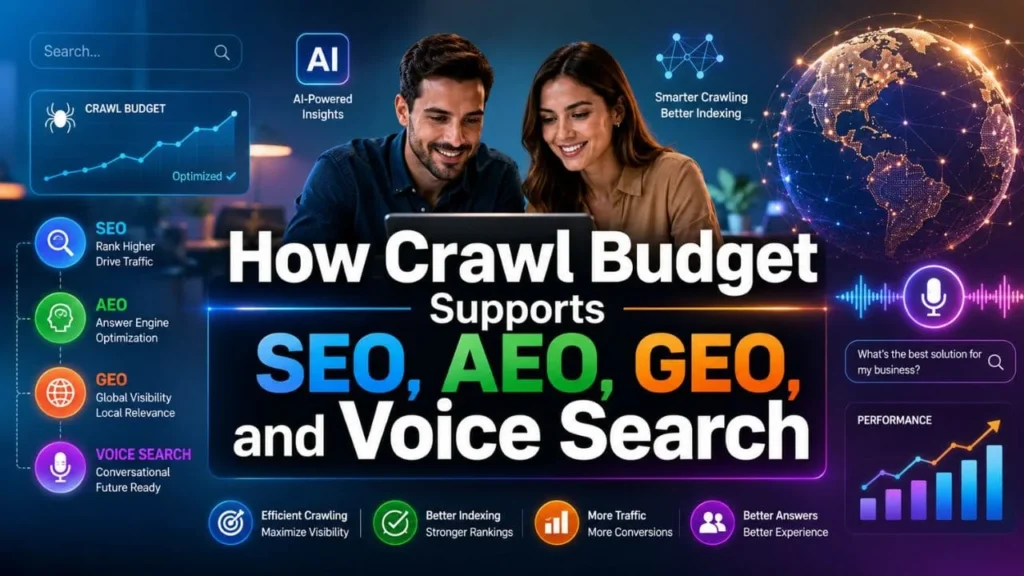

How Crawl Budget Supports SEO, AEO, GEO, and Voice Search

Modern search is not limited to traditional blue links. People now use search engines, AI answer tools, and voice assistants to find information.

Your website must be easy to crawl, understand, and quote.

SEO Benefit

For search engines, clean crawling helps important pages get discovered and updated faster. This supports ranking potential across service pages, blogs, categories, and resource pages.

AEO Benefit

Answer Engine Optimization depends on clear, direct, and structured information. When crawlers can access your best content, answer engines can understand your content more easily.

GEO Benefit

Generative Engine Optimization depends on topic clarity and entity relationships. Strong internal links, structured content, schema markup, and clean crawl paths help AI systems understand your expertise.

Voice Search Benefit

Voice assistants prefer clear answers. A strong crawl budget SEO strategy makes it easier for search engines to process direct answers, FAQs, and structured sections.

For example:

What is crawl budget SEO?

Crawl budget SEO is the process of helping search engines crawl the most important pages of a website efficiently.

Why is crawl budget important for large websites?

It matters because large websites often have many URLs, and search engines need help finding the pages that deserve attention.

Best Practices for Crawl Budget SEO

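Use these best practices to make your website easier to crawl and understand: keep sitemaps clean, write robots.txt rules carefully, strengthen internal linking, keep pages fast, and maintain content quality.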

These practices work well with Technical SEO, Local SEO services, social media marketing services, and complete Digital marketing services because they improve how users and search engines move through your site.

Final Thoughts

Large websites need more than content publishing. They need a clean crawl path, clear site structure, fast performance, and strong internal linking.

Crawl budget SEO helps search engines spend more time on your important pages and less time on duplicate, broken, or low value URLs. It supports faster discovery, better indexing, improved technical health, and stronger visibility across search engines, AI tools, and voice search platforms.

For websites with many URLs, crawl budget should be reviewed regularly. When you combine clean sitemaps, smart robots.txt rules, strong internal linking, fast page speed, better content quality, and a proper web development checklist for SEO ready sites, your website becomes easier for search engines to crawl and easier for users to trust.

FAQs

What is crawl budget SEO?

Crawl budget SEO is the process of improving how search engines crawl your website so they can find and process your most important pages efficiently.

Why is crawl budget important for large websites?

Crawl budget is important for large websites because they often have many URLs. Without proper control, search bots may waste time on duplicate, broken, outdated, or low value pages.

How do I check crawl budget issues?

You can check crawl budget issues through Google Search Console Crawl Stats, Page Indexing reports, XML sitemap reports, URL Inspection, and server log analysis.

Does robots.txt improve crawl budget?

Robots.txt can help manage crawl activity by blocking unnecessary URL paths. However, it must be used carefully because blocking the wrong pages can hurt crawling and indexing.

Can crawl budget affect rankings?

Crawl budget optimization does not guarantee rankings on its own, but poor crawling can delay indexing and reduce how often search engines refresh important pages. This can affect organic visibility.

How can ecommerce websites improve crawl budget?

Ecommerce websites can improve crawl budget by controlling faceted navigation, cleaning XML sitemaps, fixing duplicate URLs, improving internal links, removing thin pages, and improving server speed.

Is crawl budget only important for Google?

No. Crawl efficiency matters for all search engines. It also supports AI search tools and voice search platforms because they need clean, accessible, and well structured content to understand your website.

How often should crawl budget be reviewed?

Large websites should review crawl data every month. Websites that publish many pages or change URLs often should check crawl activity more frequently.